Virtualized network interfaces deliver sufficient performance for most workloads. But when a VM requires bare-metal network throughput — such as an OPNsense firewall, a storage gateway running iSCSI, or a packet-processing appliance — PCIe passthrough is the answer. It assigns a physical PCI Express device directly to a VM, bypassing the virtual network bridge entirely.

Prerequisites

Hardware Requirements

PCIe passthrough requires IOMMU support — a CPU and chipset feature that partitions physical devices into isolated groups:

- Intel: VT-d (Virtualization Technology for Directed I/O)

- AMD: AMD-Vi (AMD I/O Virtualization Technology)

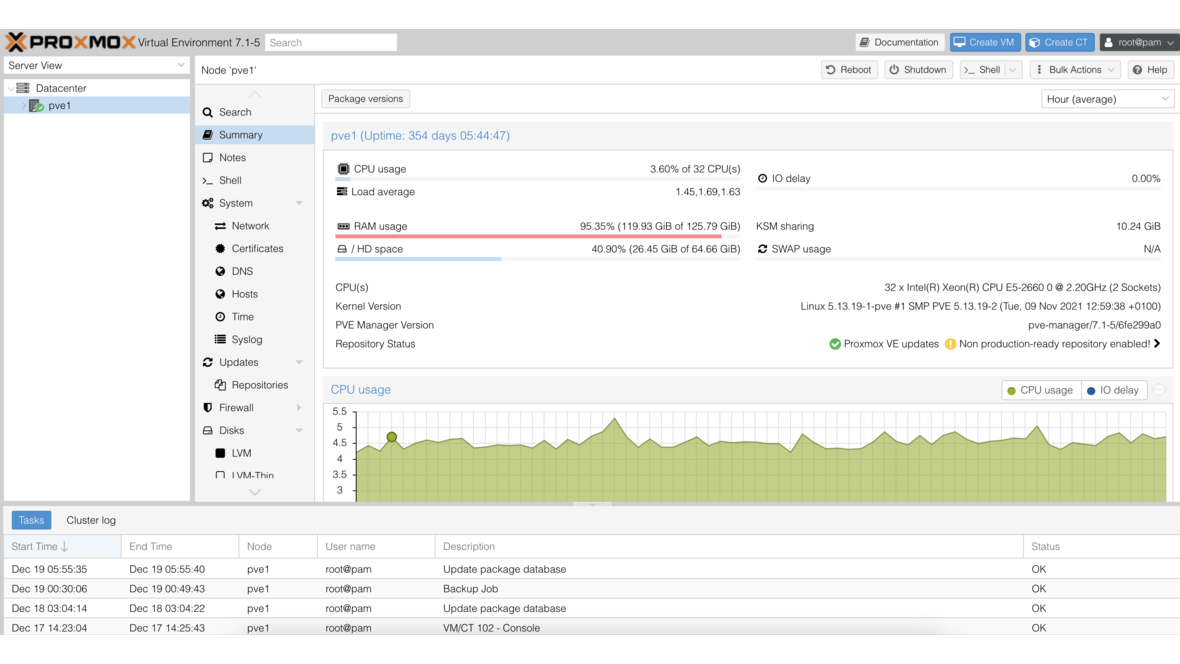

Not every motherboard-CPU combination fully supports IOMMU. Server hardware (Xeon, EPYC) generally provides the best compatibility. Consumer boards sometimes have unusable IOMMU groups where too many devices are lumped together.

BIOS/UEFI Settings

Enable the following in BIOS:

- Intel VT-d or AMD IOMMU (depending on CPU)

- Above 4G Decoding (relevant for GPUs, usually not needed for NICs)

- ACS (Access Control Services), if the firmware exposes such an option; it improves IOMMU group separation

Enabling IOMMU in the Kernel

Setting Kernel Parameters

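Edit the GRUB configuration, set the kernel command line for your CPU vendor, and regenerate the boot configuration:

nano /etc/default/grub

# For Intel CPUs

# GRUB_CMDLINE_LINUX_DEFAULT="quiet intel_iommu=on iommu=pt"

# For AMD CPUs (the AMD IOMMU is normally enabled by default, so amd_iommu=on is usually redundant)

# GRUB_CMDLINE_LINUX_DEFAULT="quiet amd_iommu=on iommu=pt"

# Apply the change

update-grub

The iommu=pt (passthrough mode) parameter lets devices that stay with the host use identity-mapped DMA, so only devices actually being passed through incur the IOMMU translation overhead. All other devices operate normally.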
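After the next reboot it can help to confirm that the parameters actually reached the running kernel. The following is a minimal sketch; `check_iommu_cmdline` is a hypothetical helper name, not a Proxmox tool, and it only inspects the string it is given.

```shell
#!/bin/bash
# Sketch: report which IOMMU-related parameters a kernel command line contains.
# check_iommu_cmdline is a hypothetical helper, not part of Proxmox.
check_iommu_cmdline() (
    set -f    # no filename globbing while the command line is split into words
    found=""
    for word in $1; do
        case "$word" in
            intel_iommu=*|amd_iommu=*|iommu=*) found="$found $word" ;;
        esac
    done
    if [ -n "$found" ]; then
        echo "IOMMU parameters:$found"
    else
        echo "no IOMMU parameters set"
        return 1
    fi
)
```

On a running host, the function would be called as `check_iommu_cmdline "$(cat /proc/cmdline)"`. It only shows what was requested on the command line; whether the IOMMU is really active still has to be checked in the kernel log.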

If Proxmox was installed with systemd-boot:

# Edit the file

nano /etc/kernel/cmdline

# Content: root=ZFS=rpool/ROOT/pve-1 boot=zfs quiet intel_iommu=on iommu=pt

# Update boot configuration

proxmox-boot-tool refresh

Loading VFIO Modules

Create a configuration file for the required kernel modules:

cat <<EOF > /etc/modules-load.d/vfio.conf

vfio

vfio_iommu_type1

vfio_pci

EOF

Update the initramfs and reboot:

update-initramfs -u -k all

reboot

Verifying IOMMU Activation

After rebooting, verify that IOMMU is active:

dmesg | grep -e DMAR -e IOMMU -e AMD-Vi

# Expected output on Intel systems includes:

# DMAR: IOMMU enabled

# DMAR: Intel(R) Virtualization Technology for Directed I/O

# On AMD systems, look for lines prefixed with AMD-Vi instead

Analyzing IOMMU Groups

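Every PCI device belongs to an IOMMU group, and the group is the smallest unit that can be isolated. When you pass through a device, every other device in its group must also be detached from the host (bound to vfio-pci or passed to the same VM); a single device cannot be split out of its group. This makes it critical that the target network card sits in its own group or a small one.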

#!/bin/bash

# Display IOMMU groups

for d in /sys/kernel/iommu_groups/*/devices/*; do

    n=${d#*/iommu_groups/*}

    n=${n%%/*}

    printf "IOMMU Group %s: " "$n"

    lspci -nns "${d##*/}"

done | sort -k3,3n    # sort numerically by group number (third field)

Typical output for a dedicated Intel NIC:

IOMMU Group 14: 03:00.0 Ethernet controller [0200]: Intel Corporation I350 Gigabit Network Connection [8086:1521] (rev 01)

IOMMU Group 14: 03:00.1 Ethernet controller [0200]: Intel Corporation I350 Gigabit Network Connection [8086:1521] (rev 01)

If the NIC shares a group with the root port or other essential devices, you need an ACS override patch or must try a different PCIe slot.
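For a single candidate device, it is often quicker to ask sysfs directly which devices share its group than to scan the full listing. The following is a sketch under assumptions: `group_mates` is a hypothetical helper name, and the `SYSFS_ROOT` variable is introduced here only so the function can be pointed at a test directory instead of /sys.

```shell
#!/bin/bash
# Sketch: list every PCI device that shares an IOMMU group with a given device.
# group_mates and SYSFS_ROOT are hypothetical names used for illustration.
group_mates() {
    local addr="$1" root="${SYSFS_ROOT:-/sys}"
    local group_dir="$root/bus/pci/devices/$addr/iommu_group/devices"
    local dev
    if [ ! -d "$group_dir" ]; then
        echo "no IOMMU group for $addr (is the IOMMU enabled?)" >&2
        return 1
    fi
    for dev in "$group_dir"/*; do
        [ -e "$dev" ] || continue
        echo "${dev##*/}"    # print only the PCI address
    done
}
```

Called as `group_mates 0000:03:00.0`, it prints one PCI address per line, including the device itself. If anything other than the functions of the card you intend to pass through appears, the group is shared.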

Reserving the Device for vfio-pci

To prevent Proxmox from binding the device with its default driver, explicitly reserve it for vfio-pci:

# Identify PCI IDs of the network card

lspci -nn | grep -i ethernet

# Output: 03:00.0 Ethernet controller [0200]: Intel Corporation I350 [8086:1521]

# Configure vfio-pci for this vendor:device ID

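echo "options vfio-pci ids=8086:1521" > /etc/modprobe.d/vfio.conf

An ids= entry matches every card with that vendor:device ID, so with multiple identical cards all of them are bound to vfio-pci. To reserve only one specific card, set a driver override for its PCI address instead:

# Bind a specific device by address (takes effect immediately, but does not persist across reboots)

echo vfio-pci > /sys/bus/pci/devices/0000:03:00.0/driver_override

echo 0000:03:00.0 > /sys/bus/pci/devices/0000:03:00.0/driver/unbind

echo 0000:03:00.0 > /sys/bus/pci/drivers_probe

# Blacklist the default driver (only if no other card on the host needs it)

echo "blacklist igb" >> /etc/modprobe.d/blacklist.conf

After changing files under /etc/modprobe.d, update the initramfs and reboot: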

update-initramfs -u -k all

reboot

Verifying the Binding

lspci -nnk -s 03:00.0

# Kernel driver in use: vfio-pci

If vfio-pci is shown as the driver, the device is correctly reserved.
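The same information is available from sysfs, which is convenient when the check should run in a script. This is a sketch only: `bound_driver` is a hypothetical helper name, and `SYSFS_ROOT` is an assumed override used to make the function testable outside a real host.

```shell
#!/bin/bash
# Sketch: print the kernel driver a PCI device is currently bound to.
# bound_driver and SYSFS_ROOT are hypothetical names used for illustration.
bound_driver() {
    local addr="$1" root="${SYSFS_ROOT:-/sys}"
    local link="$root/bus/pci/devices/$addr/driver"
    if [ -L "$link" ]; then
        # The "driver" entry is a symlink whose last component is the driver name.
        basename "$(readlink "$link")"
    else
        echo "none"
    fi
}
```

A call such as `bound_driver 0000:03:00.0` should print vfio-pci once the reservation has taken effect. Seeing igb here would mean the host driver claimed the card first.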

Configuring Passthrough in the VM

Via the Web Interface

- Open the VM configuration in Proxmox VE

- Hardware > Add > PCI Device

- Select the desired device from the list

- Enable All Functions (for multi-port cards)

- Enable PCI-Express (for PCIe devices, not legacy PCI)

- Set the Machine Type to q35 (under Hardware > Machine)

Via the Configuration File

# /etc/pve/qemu-server/<VMID>.conf

machine: q35

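hostpci0: 03:00,pcie=1

An address without a function suffix (03:00) passes through all functions of the device. To list the functions of a multi-port card explicitly:

hostpci0: 03:00.0;03:00.1,pcie=1

BIOS Type and Machine Type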

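Presenting the device to the guest as a PCIe device (pcie=1) requires the q35 machine type. The older i440fx machine type has no native PCIe support, so a device can only be attached there as conventional PCI. Also choose OVMF (UEFI) instead of SeaBIOS as firmware, since OVMF provides better PCIe compatibility.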
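These requirements can be checked mechanically against a VM configuration file. The sketch below assumes the key names shown in this article (machine, hostpci0) plus the bios: ovmf key; `check_vm_conf` is a hypothetical helper name. It greps the whole file, so it is only meaningful for configurations without snapshot sections.

```shell
#!/bin/bash
# Sketch: sanity-check a VM config for PCIe passthrough prerequisites.
# check_vm_conf is a hypothetical helper; it does not modify the file.
check_vm_conf() {
    local conf="$1"
    if ! grep -Eq '^hostpci[0-9]+:' "$conf"; then
        echo "no hostpci entry found"
        return 1
    fi
    # pcie=1 is only valid together with the q35 machine type.
    if grep -Eq '^hostpci[0-9]+:.*pcie=1' "$conf" && ! grep -Eq '^machine:.*q35' "$conf"; then
        echo "pcie=1 requires machine: q35"
        return 1
    fi
    if grep -Eq '^bios:[[:space:]]*ovmf' "$conf"; then
        echo "ok: passthrough configured, OVMF firmware"
    else
        echo "ok: passthrough configured, firmware is not OVMF"
    fi
}
```

Typical usage would be `check_vm_conf /etc/pve/qemu-server/<VMID>.conf` with the real VM ID filled in.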

SR-IOV: Virtual Functions Instead of Full Passthrough

Single Root I/O Virtualization (SR-IOV) splits a physical network card into multiple Virtual Functions (VFs), each of which can be passed through to different VMs. Unlike full passthrough, the Physical Function (PF) remains with the host.

Enabling SR-IOV

# Check if the card supports SR-IOV

lspci -vvv -s 03:00.0 | grep -i "sr-iov"

# Enable virtual functions (example: Intel X710)

echo 4 > /sys/class/net/ens1f0/device/sriov_numvfs

# Make persistent

cat <<'EOF' > /etc/udev/rules.d/10-sriov.rules

ACTION=="add", SUBSYSTEM=="net", KERNELS=="0000:03:00.0", ATTR{device/sriov_numvfs}="4"

EOF

After activation, the VFs appear as separate PCI devices:

lspci | grep -i virtual

# 03:02.0 Ethernet controller: Intel Corporation X710 Virtual Function

# 03:02.1 Ethernet controller: Intel Corporation X710 Virtual Function

Each VF can be passed through to a VM like a regular PCI device, with its own MAC address, VLAN, and QoS profile.
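Before choosing a VF count, it is worth checking how many VFs the card supports and how many are currently enabled. The sketch below reads the sriov_numvfs and sriov_totalvfs attributes from sysfs. `vf_capacity` is a hypothetical helper name, and `SYSFS_ROOT` is an assumed override for testing.

```shell
#!/bin/bash
# Sketch: print "enabled/supported" VF counts for a network interface.
# vf_capacity and SYSFS_ROOT are hypothetical names used for illustration.
vf_capacity() {
    local iface="$1" root="${SYSFS_ROOT:-/sys}"
    local dev="$root/class/net/$iface/device"
    if [ ! -r "$dev/sriov_totalvfs" ]; then
        echo "$iface: no SR-IOV capability exposed" >&2
        return 1
    fi
    echo "$(cat "$dev/sriov_numvfs")/$(cat "$dev/sriov_totalvfs")"
}
```

For the example above, `vf_capacity ens1f0` would print the 4 enabled VFs followed by the card's maximum. Per-VF settings are then applied on the host through the PF, for example `ip link set dev ens1f0 vf 0 mac 02:00:00:00:00:01` and `ip link set dev ens1f0 vf 0 vlan 100`, where the MAC address and VLAN ID are placeholder values.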

SR-IOV Advantages

| Feature | Full Passthrough | SR-IOV |
|---|---|---|
| VMs per card | 1 | One per VF (maximum VF count depends on the card) |
| Host access | No | Yes (PF stays with host) |
| Live migration | No | Limited (bond failover) |
| Performance | Native | Near-native |
| Hardware requirement | Any PCIe card | SR-IOV capable card |

Passing Through HBAs (Storage Controllers)

For TrueNAS or ZFS VMs, passing through an HBA (Host Bus Adapter) in IT mode is essential. ZFS requires direct access to the disks — a hardware RAID controller in between prevents ZFS from managing its own data integrity.

# Identify the HBA

lspci -nn | grep -i "SAS\|SCSI\|LSI"

# 01:00.0 Serial Attached SCSI controller [0107]: Broadcom / LSI SAS3008 [1000:0097]

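# Reserve for vfio-pci. Keep all passthrough IDs comma-separated on a single options line;

# a second "options vfio-pci ids=..." line can override the first instead of extending it.

echo "options vfio-pci ids=8086:1521,1000:0097" > /etc/modprobe.d/vfio.conf

update-initramfs -u -k all

reboot

Troubleshooting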

VM Fails to Start

Error: vfio: failed to set up container

- Verify IOMMU is correctly enabled (dmesg | grep IOMMU)

- Ensure all devices in the IOMMU group are bound to vfio-pci

Error: BAR resources not available

- Enable Above 4G Decoding in BIOS

- Use q35 as Machine Type

Unstable Network Performance

- Check interrupt assignment on the host: cat /proc/interrupts | grep vfio

- Configure CPU pinning for the VM to avoid NUMA crossing

- For Intel cards: check interrupt moderation inside the VM, where the interface now lives (ethtool -c <interface>)
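To pin the VM sensibly, you first need to know which NUMA node the passed-through card is attached to and which host CPUs belong to that node. The sketch below reads both from sysfs. `device_numa_cpus` is a hypothetical helper name, and `SYSFS_ROOT` is an assumed override for testing.

```shell
#!/bin/bash
# Sketch: show the NUMA node of a PCI device and the host CPUs on that node.
# device_numa_cpus and SYSFS_ROOT are hypothetical names used for illustration.
device_numa_cpus() {
    local addr="$1" root="${SYSFS_ROOT:-/sys}"
    local node
    node=$(cat "$root/bus/pci/devices/$addr/numa_node") || return 1
    # The kernel reports -1 when the platform provides no NUMA affinity.
    if [ "$node" -lt 0 ]; then
        echo "no NUMA affinity reported for $addr"
        return 0
    fi
    echo "node $node: CPUs $(cat "$root/devices/system/node/node$node/cpulist")"
}
```

The CPU list printed for the card's node is the range to pin the VM's vCPUs to, so that interrupts and packet processing stay on the same node as the NIC.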

IOMMU Group Too Large

When multiple devices are grouped together:

- Move the card to a different PCIe slot

- Use the ACS override patch (kernel parameter pcie_acs_override=downstream,multifunction); note that this weakens the isolation between the devices it splits apart

- Use server hardware with better IOMMU support
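To judge whether a slot change or the override actually helped, compare the group sizes before and after. The following sketch prints one line per group with its device count. `group_sizes` is a hypothetical helper name, and `SYSFS_ROOT` is an assumed override for testing.

```shell
#!/bin/bash
# Sketch: print "<group> <device count>" for every IOMMU group, sorted by group number.
# group_sizes and SYSFS_ROOT are hypothetical names used for illustration.
group_sizes() {
    local root="${SYSFS_ROOT:-/sys}" g count
    for g in "$root"/kernel/iommu_groups/*; do
        [ -d "$g/devices" ] || continue
        count=$(find "$g/devices" -mindepth 1 -maxdepth 1 | wc -l)
        printf '%s %d\n' "${g##*/}" "$count"
    done | sort -n
}
```

Groups with a count above the number of functions on the card you want to pass through are the ones to look at more closely.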

Monitoring with DATAZONE Control

DATAZONE Control monitors VMs with passed-through PCI devices: network throughput, latency, and error counters from physical NICs feed into the central dashboard. For SR-IOV setups, both the Physical Function and all Virtual Functions are tracked individually — including alerting on link-down events or rising error counters.

Planning PCIe passthrough for your Proxmox environment? Contact us — we advise on hardware selection and configure passthrough and SR-IOV for maximum performance.
